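Let's write an iPhone app in which tapping a question-mark box makes one of the characters 「かきくけこ」 (ka, ki, ku, ke, ko) pop out together with its sound.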

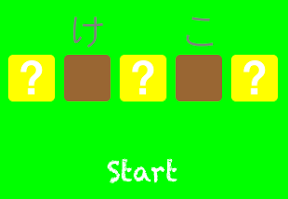

Demo

Run from Xcode in the iOS 7 iPhone Simulator, it looks like this.

Sample code

#import "ViewController.h"

#import <AVFoundation/AVFoundation.h>

@interface ViewController ()

@property (strong, nonatomic) NSMutableArray *words;

@property (strong, nonatomic) AVAudioPlayer *mySound;

@end

@implementation ViewController

- (void)viewDidLoad

{

[super viewDidLoad];

self.view.backgroundColor = [UIColor greenColor];

[self createKakikukeko];

[self createHatena];

[self createStartBtn];

}

- (void)createKakikukeko

{

self.words = [[NSMutableArray alloc] init];

NSArray *arr = @[@"か", @"き", @"く", @"け", @"こ"];

for (NSString *s in arr) {

UILabel *l = [[UILabel alloc] initWithFrame:CGRectMake(0, 0, 50, 50)];

l.text = s;

l.textColor = [UIColor grayColor];

l.font = [UIFont systemFontOfSize:40];

l.textAlignment = NSTextAlignmentCenter;

[self.words addObject:l];

}

}

- (void)createHatena

{

for (int i=0; i<5; i++) {

float x = i * 60 + 15;

float y = 300;

UILabel *hatena = [[UILabel alloc] initWithFrame:CGRectMake(x, y, 50, 50)];

hatena.layer.cornerRadius = 5;

hatena.backgroundColor = [UIColor yellowColor];

[self.view addSubview:hatena];

hatena.text = @"?";

hatena.textColor = [UIColor whiteColor];

hatena.font = [UIFont boldSystemFontOfSize:50];

hatena.textAlignment = NSTextAlignmentCenter;

hatena.userInteractionEnabled = YES;

UITapGestureRecognizer *tap = [[UITapGestureRecognizer alloc] initWithTarget:self action:@selector(open:)];

[hatena addGestureRecognizer:tap];

}

}

- (void)open:(UITapGestureRecognizer *)gr

{

gr.view.userInteractionEnabled = NO;

[UIView animateWithDuration:0.2 animations:^{

CGAffineTransform transform = CGAffineTransformMakeScale(1.1, 1.1);

gr.view.transform = CGAffineTransformTranslate(transform, 0, -5);

} completion:^(BOOL finished) {

gr.view.transform = CGAffineTransformIdentity;

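// Pick one of the remaining characters at random (arc4random_uniform avoids modulo bias).
NSUInteger target = arc4random_uniform((uint32_t)[self.words count]);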

UILabel *l = [self.words objectAtIndex:target];

l.center = CGPointMake(gr.view.center.x, gr.view.center.y);

[self.view insertSubview:l belowSubview:gr.view];

// sound

[self sound:l.text];

[UIView animateWithDuration:0.5 animations:^{

l.center = CGPointMake(gr.view.center.x, gr.view.center.y - 50);

} completion:^(BOOL finished) {

gr.view.backgroundColor = [UIColor brownColor];

[(UILabel *)gr.view setText:@""];

}];

[self.words removeObject:l];

}];

}

- (void)createStartBtn

{

UIButton *start = [UIButton buttonWithType:UIButtonTypeCustom];

[start setTitle:@"Start" forState:UIControlStateNormal];

start.titleLabel.font = [UIFont fontWithName:@"Chalkduster" size:30];

start.frame = CGRectMake(50, 400, 220, 50);

[self.view addSubview:start];

[start addTarget:self action:@selector(restart) forControlEvents:UIControlEventTouchUpInside];

}

- (void)restart

{

for (UIView *v in self.view.subviews) {

[UIView animateWithDuration:0.3 animations:^{

v.transform = CGAffineTransformMakeTranslation(0, -500);

} completion:^(BOOL finished) {

[v removeFromSuperview];

}];

}

// Rebuild the screen once the slide-out animation (0.3 s) has finished.
[self performSelector:@selector(viewDidLoad) withObject:nil afterDelay:0.5];

}

- (void)sound:(NSString *)s

{

NSArray *kakiku = @[@"か", @"き", @"く", @"け", @"こ"];

NSUInteger i = [kakiku indexOfObject:s];

if (i == NSNotFound) { return; } // unknown character: nothing to play

NSArray *names = @[@"ka", @"ki", @"ku", @"ke", @"ko"];

NSString *fileName = [names objectAtIndex:i];

NSURL *musicFile = [[NSBundle mainBundle] URLForResource:fileName withExtension:@"m4a"];

if (musicFile == nil) { return; } // sound file is missing from the app bundle

self.mySound = [[AVAudioPlayer alloc] initWithContentsOfURL:musicFile error:nil];

self.mySound.volume = 1.0;

[self.mySound play];

}

- (void)didReceiveMemoryWarning

{

[super didReceiveMemoryWarning];

// Dispose of any resources that can be recreated.

}

@end